The debate about Q-Day, the moment when quantum computing becomes capable of breaking current cryptography, has moved from theoretical blackboards to the forums and discussion spaces of the Bitcoin community.

It happened on March 31, 2026, when Google’s roadmap, which sets 2029 as the horizon for its post-quantum migration, reopened the analysis of the responsiveness of a network that, by design, prioritizes consensus over corporate speed.

In this scenario, part of the community argues that Bitcoin is not at a dead end in the face of this threat. Instead, its architecture should be viewed as a digital fortress.

In this ecosystem, each “safe” holding a balance is protected by a unique cryptographic code. The arrival of a quantum supercomputer would not bring down the walls of the fortress; it would make the current security combinations obsolete, as the economist Saifedean Ammous has pointed out.

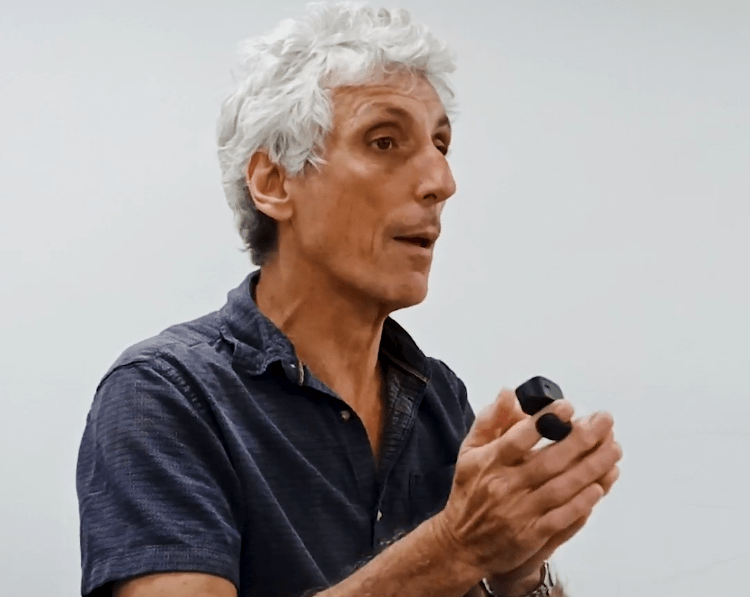

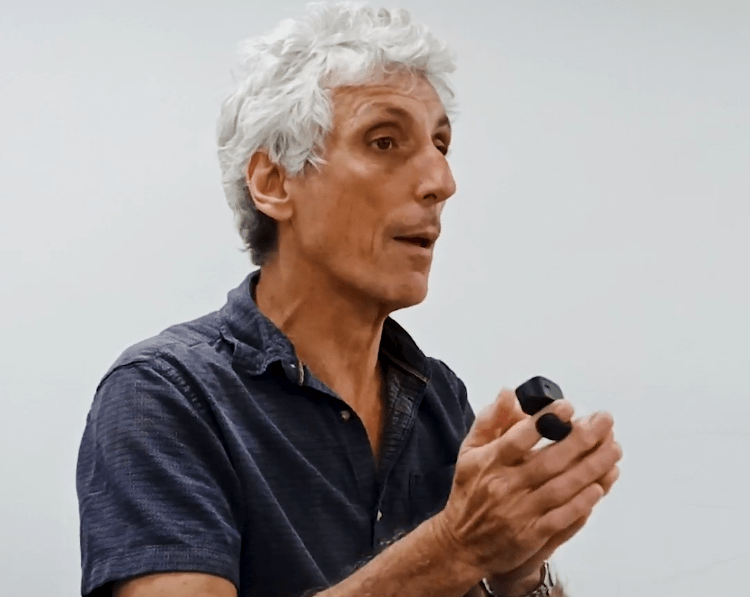

This access shield is what we know today as ECDSA, the Elliptic Curve Digital Signature Algorithm. It is the invisible gatekeeper that ensures that only the owner of a private key can authorize a movement of funds. However, in an exclusive communication with CriptoNoticias, Joaquín Keller, a specialist trained in quantum computing in France, puts a figure on the table: breaking Bitcoin’s signature algorithm would take 500,000 physical qubits (about 500 logical qubits), a milestone that the industry is rapidly pursuing.

It must be taken into account that in the quantum world, errors are the norm. A physical qubit is like a switch that constantly fails. To obtain one reliable logical qubit, the system therefore groups on the order of a thousand physical qubits and makes them ‘vote’: if the majority points in one direction, the result is considered valid. This massive redundancy explains why a threshold of 500 qubits of actual computation requires, in practice, an infrastructure of 500,000 physical units working in parallel.
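The ‘voting’ idea can be illustrated with a small sketch. It is a classical analogy only: real quantum error correction uses more elaborate codes, and the 5% error rate and the group sizes below are assumptions chosen for illustration, not figures from Keller. The one number taken from the article is the ratio of 500,000 physical qubits to 500 logical qubits.

```python
from math import comb


def majority_vote_error(n: int, p: float) -> float:
    """Probability that a majority of n independent units is wrong,
    when each unit is wrong with probability p (n should be odd)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# Figures quoted in the article.
PHYSICAL_QUBITS = 500_000
LOGICAL_QUBITS = 500
overhead = PHYSICAL_QUBITS // LOGICAL_QUBITS  # physical units per logical qubit

# Assumed per-unit error rate, purely illustrative.
ASSUMED_ERROR_RATE = 0.05

if __name__ == "__main__":
    print(f"Physical qubits per logical qubit: {overhead}")
    for group_size in (1, 3, 11, 101):
        err = majority_vote_error(group_size, ASSUMED_ERROR_RATE)
        print(f"group of {group_size:>3}: chance the vote is wrong = {err:.3e}")
```

Under these assumptions, the chance that the vote is wrong drops sharply as the group grows, which is the intuition behind spending so many physical qubits on each logical one.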

That number represents the inflection point where quantum power could ‘guess’ the code of our current BTC safes. Keller warns that while Bitcoin is impregnable today, the speed of technical progress suggests that this risk threshold for the ECDSA algorithm could be reached sooner than projected, forcing the network into a mass migration to a new shielding standard.

Urgency or caution for Bitcoin?

Keller highlights the imminence of the challenge, pointing out that “the situation is urgent for Bitcoin. In 2024 we reached the milestone of the first logical qubit with Google’s Willow processor, and in 2025 Quantinuum already reported 50 logical qubits with its Helios system.”

According to current estimates, Bitcoin’s digital signature could be compromised starting at 500 logical qubits. “Personally, I do not rule out that this scenario materializes before 2029,” he said.

The challenge, however, is not only technical but also operational. Migrating Bitcoin to new shielding standards comes at a price. Keller, who currently does academic work at the Central University of Venezuela (UCV), puts this into perspective.

The problem is that, by its very nature, Bitcoin has no central authority to make decisions and impose a solution. Reaching consensus in this context is a slow process. And some wallets may not have a living owner to migrate them; I am thinking, for example, of the wallet of Satoshi, the creator of Bitcoin.

Joaquín Keller.

It is also worth keeping in mind that migrating Bitcoin to post-quantum algorithms involves an operational trade-off: digital signatures would be significantly larger. This would result in heavier transactions, higher fees for the use of block space, and a greater challenge to network scalability.
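To give a rough sense of scale, the sketch below compares the bytes needed for one signature plus one public key under ECDSA and under two signature schemes standardized by NIST. The choice of schemes is an assumption made for illustration: Bitcoin has not adopted or selected any post-quantum scheme, and the article names none. The sizes are the published parameter-set sizes, with ECDSA taken as roughly 72 bytes for a DER-encoded signature and 33 bytes for a compressed key.

```python
# (signature_bytes, public_key_bytes) per scheme.
# The post-quantum entries are illustrative candidates, not a Bitcoin proposal.
SCHEMES = {
    "ECDSA (secp256k1)": (72, 33),       # approximate DER signature, compressed key
    "ML-DSA-44": (2420, 1312),           # NIST FIPS 204 parameter set
    "SLH-DSA-SHA2-128s": (7856, 32),     # NIST FIPS 205 parameter set
}


def footprint(name: str) -> int:
    """Bytes needed for one signature plus one public key."""
    signature_bytes, public_key_bytes = SCHEMES[name]
    return signature_bytes + public_key_bytes


baseline = footprint("ECDSA (secp256k1)")

if __name__ == "__main__":
    for name in SCHEMES:
        total = footprint(name)
        print(f"{name:<20} {total:>5} bytes  ({total / baseline:.1f}x ECDSA)")
```

The comparison ignores everything else in a transaction, so it only shows the direction and approximate magnitude of the growth in signature data, not the effect on actual fees.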

Breaking bitcoin is not a linear task

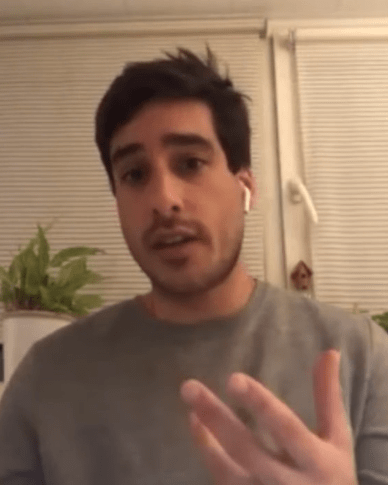

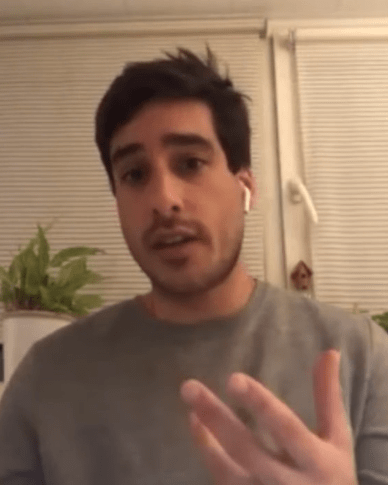

Against this backdrop, Alejandro De La Torre, CEO and co-founder of DMND Pool and a recognized voice in the industry, brings a dose of pragmatism. For him, the threat remains a long-term field of research and the 2029 horizon functions more as a reference point than a signal of collapse.

“I do not consider that it represents an immediate operational risk,” he told CriptoNoticias, highlighting that the industry is already proactively exploring post-quantum schemes. De La Torre is on the cautious side, noting that Bitcoin currently has no visible cracks.

Although Google’s 2029 horizon may serve as a benchmark for coordinating efforts, there is currently no concrete evidence that Bitcoin’s cryptography is close to being breached in the short term. In that sense, I am more in the camp of those who think that there is no reason to be alarmed right now, but at the same time I recognize that it is sensible to continue promoting work on post-quantum schemes.

Alejandro De La Torre.

Other community members expanded the exchange with technical analysis. The founder of the NGO Bitcoin Argentina, Rodolfo Andragnes, indicated that the risk is especially concentrated in Legacy wallets that have spent funds but still hold a balance, and noted that “it is not about running around like Chicken Little shouting ‘the sky is falling’, but rather, as a community, and like Google, about continuing to pay attention to these advances, exploring solutions and understanding in advance how to protect your own BTC.”

Analysts such as the Colombian bitcoiner BTCAndrés also joined in, putting the focus on the physical barriers that still hold back quantum “supremacy.”

In their view, the risk is not imminent because of critical limitations in the entanglement of logical qubits and in coherence times, that is, the length of time a quantum computer can maintain information before the environment corrupts it. These technical obstacles suggest that, although hardware is advancing, the stability necessary to break Bitcoin remains a major challenge.

In the end, the debate remains open, with no formal migration plan on the horizon. While software giants already shield their applications with post-quantum standards, Bitcoin remains faithful to its essence: a decentralized update mechanism that responds not to external pressures but to the majority agreement of its community.

The sector now observes a delicate balance: on one side, the acceleration in processing power; on the other, the solidity of the code and a protocol that prefers caution to hasty reform. Ultimately, the real race is between technological evolution and the ability of decentralized systems to adapt without losing their identity.