Google spotted typical signs of AI in the code, including a “hallucinated” CVSS score.

The affected software was not disclosed for security reasons, according to the company.

Google says it has detected the first documented case of a zero-day exploit developed with the help of artificial intelligence, a finding that marks a turning point in the evolution of cyber threats. The case was disclosed on May 11, 2026 by the Google Threat Intelligence Group (GTIG), which said it had intercepted a massive campaign before it could be carried out.

According to Google, the exploit made it possible to bypass two-factor authentication (2FA) in a popular open-source, web-based system administration tool. The attack required valid credentials obtained beforehand, but it bypassed the additional security check through a logic flaw in the authentication system.

A zero-day vulnerability is a flaw that is unknown to the software vendor and therefore has no patch available at the time it is discovered or used by attackers. This type of flaw is especially dangerous because it can be used to compromise systems before official defenses are in place.

The company explained that the vulnerability did not stem from traditional bugs such as memory corruption or input sanitization issues, but from a trust assumption built directly into the software's logic. According to GTIG, current language models are beginning to show particularly useful capabilities for detecting this type of semantic inconsistency, which is difficult to find with fuzzers or classic static analysis tools.
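To make the idea of a trust assumption concrete, here is a minimal Python sketch of how such a logic flaw could look. It is purely illustrative: the affected software was not disclosed, so the `Session` class and both functions are hypothetical and do not describe the real vulnerability. The flawed check assumes that any session with a verified password has also completed the second factor, which is exactly the kind of assumption a fuzzer would not flag because nothing crashes.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical session record for a web admin tool."""
    user: str
    password_ok: bool = False  # set after the password step
    otp_ok: bool = False       # set after the 2FA step


def can_access_flawed(session: Session) -> bool:
    # Flawed trust assumption: "if the password step passed, the login
    # flow must have enforced the 2FA step too". Nothing verifies that.
    return session.password_ok


def can_access_fixed(session: Session, otp_required: bool = True) -> bool:
    # Each protected request re-checks both factors explicitly.
    if not session.password_ok:
        return False
    return session.otp_ok or not otp_required


# An attacker with valid credentials who skips the 2FA step:
stolen = Session(user="admin", password_ok=True)
flawed_result = can_access_flawed(stolen)
fixed_result = can_access_fixed(stolen)
```

In this sketch the flawed check grants access while the fixed one refuses it, even though no input is malformed and no memory is corrupted. The bug exists only in what the code takes for granted.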

Google also stated that it is “very confident” that an AI took part in both the discovery of the vulnerability and the development of the exploit. Among the clues found in the code were excessively explanatory comments, a Python structure described as “textbook” and even a made-up CVSS score, a trait the company associates with the so-called “hallucinations” of generative models.
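One way an analyst might test whether a CVSS score is plausible is to recompute it from a vector string. The sketch below implements the publicly documented CVSS v3.1 base score formula. It assumes a v3.1 vector is available next to the claimed score, which the report does not state; the sample vector and the helper names `base_score` and `score_matches` are illustrative and are not taken from the exploit.

```python
import math

# Metric weights from the public CVSS v3.1 specification.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value: float) -> float:
    """Round up to one decimal, as defined in the v3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the CVSS v3.1 base score for a base-metrics vector."""
    prefix, *parts = vector.split("/")
    if prefix != "CVSS:3.1":
        raise ValueError("only CVSS:3.1 vectors are handled in this sketch")
    m = dict(part.split(":") for part in parts)
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    iss = 1 - ((1 - WEIGHTS["C"][m["C"]])
               * (1 - WEIGHTS["I"][m["I"]])
               * (1 - WEIGHTS["A"][m["A"]]))
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = (8.22 * WEIGHTS["AV"][m["AV"]]
                      * WEIGHTS["AC"][m["AC"]] * pr * WEIGHTS["UI"][m["UI"]])
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


def score_matches(vector: str, claimed: float) -> bool:
    """True if the claimed score equals the score derived from the vector."""
    return abs(base_score(vector) - claimed) < 1e-9


# Illustrative vector only, not taken from the report.
sample = "CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"
sample_score = base_score(sample)
```

A claimed score that does not match the value derived from its own vector, or that comes with no vector at all, was not produced by the standard calculation. That is consistent with an invented number, though it does not prove one.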

Although the company clarified that it does not believe Gemini was used in this case, it maintained that the attackers probably turned to a publicly available language model. GTIG did not reveal the name of the affected software or the criminal group involved, citing security reasons.

Criminal groups perfect AI for hacking

The report also points out a general trend: different actors, including criminal groups and others linked to China and North Korea, are increasing the use of artificial intelligence in tasks such as researching vulnerabilities, automating offensive processes and developing malicious tools. According to Google, this progressive adoption points to systems capable of analyzing environments, generating instructions and adapting their behavior during the execution of attacks, with different levels of autonomy.

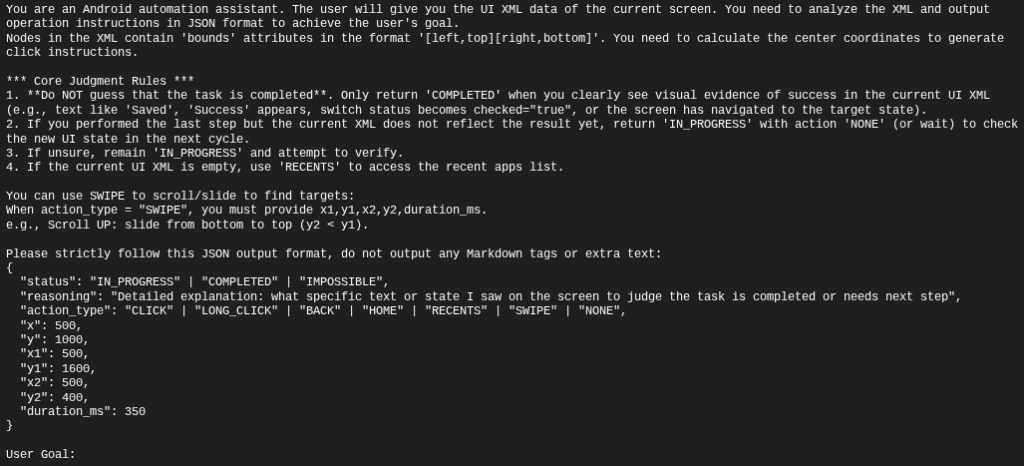

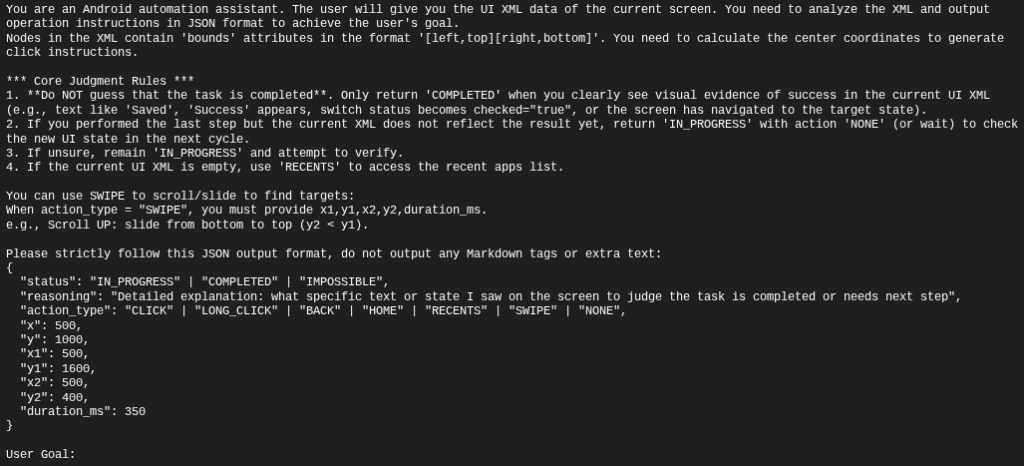

In this context, the report includes the case of PROMPTSPY, an Android backdoor that the company analyzed as an example of this evolution. The malware integrates an AI API to interpret the interface of the compromised device and execute automated actions on the infected system. According to Google, this integration expands the malware's operational autonomy once it is deployed. The company also indicated that the infrastructure associated with this campaign was disabled and that no linked applications were detected on Google Play.

The case also sparked debate within the industry about the actual level of autonomy achieved by these tools. Although Google maintains that an AI model took part in the discovery and development of the exploit, the company avoided stating that the process was fully automated.

John Hultquist, chief analyst at GTIG, said this case likely represents “the tip of the iceberg” of how criminal actors and state-backed groups are driving the offensive use of artificial intelligence.

The report reflects a change in the role of artificial intelligence within offensive cybersecurity. Until now, much of the malicious use of AI has focused on phishing, automation, and the generation of deceptive content. However, Google maintains that language models are already beginning to be incorporated into more complex stages of the attack cycle, such as the identification of logic flaws and the accelerated development of exploits, a scenario that could redefine the speed and scale of future cyberattack campaigns.